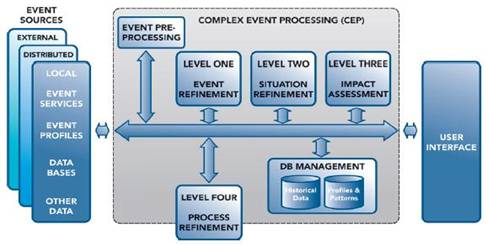

In an earlier blog entry, What is Complex Event Processing? (Part 1), we introduced a functional reference architecture for event processing. Now, we discuss another important component of distributed CEP architectures, event preprocessing.

Event preprocessing is a general term for normalizing data in preparation for upstream, "higher level" event processing. Referred to as Level 0 Preprocessing in the JDL model (see figure), it typically covers data normalization, validation, prefiltering and basic feature extraction. When required, event preprocessing is often the first step in a distributed, heterogeneous complex event processing solution.

As an illustrative example, visualize a high-performance network service that passively captures all inbound and outbound network traffic from a farm of 300 e-commerce web servers. We must first normalize the network capture data so that it can be further processed. How do you extract HTTP session information from an encrypted click stream in real time? What information do you forward as an event? Do you send just the HTTP header information, or other key attributes of the payload as well? Do you strip out the HTML image files, or do you replace them with the image metadata? These are examples of important questions that must be considered in a web-based event processing application.
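To make the "what do you forward as an event?" question concrete, here is a minimal Python sketch of one possible answer: forward only the request line and a handful of headers. It assumes the traffic has already been decrypted somewhere upstream of this step, and the function name `http_request_to_event` and the `FORWARDED_HEADERS` list are hypothetical choices for illustration, not part of any product.

```python
from typing import Dict

# Hypothetical selection of headers worth forwarding; a real deployment would tune this.
FORWARDED_HEADERS = ("host", "user-agent", "referer", "cookie")


def http_request_to_event(raw_request: str) -> Dict[str, str]:
    """Reduce one already-decrypted HTTP request to a small, flat event."""
    # Split the header section from the payload; the payload is dropped here.
    head, _, _body = raw_request.partition("\r\n\r\n")
    lines = head.split("\r\n")

    # The first line is the request line: method, path, protocol version.
    method, path, version = lines[0].split(" ", 2)
    event = {"method": method, "path": path, "version": version}

    # Keep only the selected headers, with lower-cased names as keys.
    for line in lines[1:]:
        name, sep, value = line.partition(":")
        key = name.strip().lower()
        if sep and key in FORWARDED_HEADERS:
            event[key] = value.strip()
    return event


sample = (
    "GET /cart/checkout HTTP/1.1\r\n"
    "Host: shop.example.com\r\n"
    "User-Agent: ExampleBrowser/1.0\r\n"
    "Accept: text/html\r\n"
    "\r\n"
)
event = http_request_to_event(sample)
print(event)
```

Dropping the payload entirely is only one of the options raised above; replacing images with their metadata would mean inspecting the body instead of discarding it.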

In another example, we are building a network-management CEP application and will be correlating events using a stateful, high-speed rules engine. The event sources are, for example, SNMP traps and log file data from two network applications. How do we normalize (transform) the data for event processing? How much filtering is performed at the data source versus at the upstream event processing agent?
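One way to picture the normalization question is a small Python sketch that maps both sources onto a single event shape, validates them, and prefilters at the source. Everything here is an assumption made for illustration: the `Event` fields, the trap dictionary keys (`agent`, `epoch`, `severity`, `text`), the log line layout, and the severity mappings are hypothetical and do not reflect any particular SNMP library or log format.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class Event:
    """Hypothetical common schema shared by all event sources."""
    source: str
    host: str
    severity: str
    timestamp: datetime
    message: str


SEVERITY_ORDER = ("info", "warning", "critical")
TRAP_SEVERITY = {0: "info", 1: "warning", 2: "critical"}  # assumed trap encoding
LOG_LEVELS = {"INFO": "info", "WARN": "warning", "ERROR": "critical"}
# Assumed log layout: "<ISO timestamp> <host> <LEVEL> <free text>"
LOG_PATTERN = re.compile(r"^(?P<ts>\S+) (?P<host>\S+) (?P<level>[A-Z]+) (?P<msg>.*)$")


def from_trap(trap: dict) -> Optional[Event]:
    """Normalize an already-decoded trap; return None if validation fails."""
    severity = TRAP_SEVERITY.get(trap.get("severity"))
    if severity is None or "agent" not in trap or "epoch" not in trap:
        return None
    when = datetime.fromtimestamp(trap["epoch"], tz=timezone.utc)
    return Event("snmp", trap["agent"], severity, when, trap.get("text", ""))


def from_log_line(line: str) -> Optional[Event]:
    """Normalize one log line; return None if it does not validate."""
    match = LOG_PATTERN.match(line.strip())
    if match is None or match["level"] not in LOG_LEVELS:
        return None
    try:
        when = datetime.fromisoformat(match["ts"])
    except ValueError:
        return None
    return Event("log", match["host"], LOG_LEVELS[match["level"]], when, match["msg"])


def prefilter(events: Iterable[Optional[Event]], minimum: str = "warning") -> List[Event]:
    """Drop invalid events and those below the minimum severity."""
    floor = SEVERITY_ORDER.index(minimum)
    return [e for e in events
            if e is not None and SEVERITY_ORDER.index(e.severity) >= floor]


trap = {"agent": "router-1", "epoch": 0, "severity": 2, "text": "linkDown"}
line = "2008-01-15T10:30:00+00:00 app-1 INFO heartbeat ok"
events = prefilter([from_trap(trap), from_log_line(line), from_log_line("garbage")])
print(events)
```

Where `prefilter` runs is exactly the design question posed above: applying it at the data source reduces network traffic, while applying it at the event processing agent keeps low-severity events available for correlation.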

Heterogeneous, distributed event processing applications normally require some type of event preprocessing for data normalization, validation and transformation. Some simple applications, for example self-contained processing of well-formatted, homogeneous streaming market data, require very little preprocessing. However, most classes of complex event processing problems require the correlation and analysis of events from different event sources. BTW, this is a major difference between true CEP classes of problems and event stream processing (ESP) classes of problems. I will discuss this in more detail in a later blog entry.

Often our customers at TIBCO use our BusinessWorks® product to prefilter, normalize and transform raw data into JMS or TIBCO Rendezvous® messages. What is important to remember is that raw data must be transformed (normalized), securely transmitted as an electronic message across the network and formatted in a manner that optimizes event processing throughput.