Complex event processing (CEP) is an emerging network technology that creates actionable, situational knowledge from distributed message-based systems, databases and applications in real time or near real time. CEP can give an organization the capability to define, manage and predict events, situations, exceptional conditions, opportunities and threats in complex, heterogeneous networks. Advances in CEP are expected to improve the state of the art in end-to-end visibility for operational situational awareness in many business scenarios. These scenarios range from network management to business optimization, and the results include enhanced situational knowledge, increased business agility, and the ability to sense, detect and respond to business events and situations more accurately and more rapidly.

Perhaps one of the easiest ways to understand CEP is to examine the way we, and in particular our minds, interact with our world. To facilitate a common understanding, we represent the analogies between human cognition and CEP in a table:

| Human Body | Complex Event Processing | Functionality |
|---|---|---|
| Senses | Transactions, log files, edge processing, edge detection algorithms, sensors | Direct interaction with environment, provides information about environment |
| Nervous System | Enterprise service bus (ESB), information bus, digital nervous system | Transmits information between sensors and processors |
| Brain | Rules engines, neural networks, Bayesian networks, analytics, data and semantic rules | Processes sensory information, “makes sense” of environment, formulates situational context, relates current situation to historical information and past experiences, formulates responses and actions |

Table 1: Human Cognitive Functions and CEP Functionality

In a manner of speaking, CEP is a technology for extracting higher-level knowledge from situational information abstracted from processing business-sensory information. Business-sensory information is represented in CEP as event data, or event attributes, transmitted as messages over a digital nervous system, such as an electronic messaging infrastructure.

To create a processing environment that can sustain CEP operations, the electronic messaging infrastructure should be capable of one-to-one, one-to-many, many-to-one, and many-to-many communications. In some CEP applications, a queuing system architecture may be desirable. In others, a topic-based publish-and-subscribe architecture may be required. The architect’s choice of messaging infrastructure design patterns depends on a number of factors that will be discussed later in the blog.
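To make the difference between the two delivery styles concrete, here is a minimal in-memory sketch in Python. It is not the API of any real messaging product; the `InMemoryBroker` class, its method names, and the topic and queue names are all hypothetical and exist only for illustration. It assumes that a queue delivers each message to exactly one receiver, while a topic delivers a copy to every current subscriber.

```python
from collections import defaultdict, deque
from typing import Callable, Deque, Dict, List, Optional

Handler = Callable[[dict], None]


class InMemoryBroker:
    """Toy broker (hypothetical) contrasting queue and topic delivery."""

    def __init__(self) -> None:
        self._queues: Dict[str, Deque[dict]] = defaultdict(deque)
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    # Queue semantics: each message is consumed by exactly one receiver.
    def send(self, queue: str, event: dict) -> None:
        self._queues[queue].append(event)

    def receive(self, queue: str) -> Optional[dict]:
        pending = self._queues[queue]
        return pending.popleft() if pending else None

    # Topic semantics: every current subscriber gets its own copy.
    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        handlers = self._subscribers[topic]
        for handler in handlers:
            handler(dict(event))  # copy so subscribers cannot affect each other
        return len(handlers)


broker = InMemoryBroker()

# One-to-many: a single publisher fans out to two subscribers.
fraud_inbox: List[dict] = []
audit_inbox: List[dict] = []
broker.subscribe("orders.created", fraud_inbox.append)
broker.subscribe("orders.created", audit_inbox.append)
delivered = broker.publish("orders.created", {"order_id": 1, "amount": 250.0})

# Many-to-one: two producers feed one queue; each message is consumed once.
broker.send("work", {"source": "A", "task": "reprice"})
broker.send("work", {"source": "B", "task": "restock"})
first = broker.receive("work")
second = broker.receive("work")
third = broker.receive("work")  # the queue is empty by now, so this is None
```

In this sketch both subscribers see the same order event, whereas each work item is handed out only once. A production messaging layer would add persistence, acknowledgements and network transport, none of which is modeled here.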

Deploying a messaging infrastructure is at the heart of building an event-driven architecture (EDA). It follows that an EDA is a core requirement for most CEP applications. It is also safe to say that organizations that have funded and deployed a robust, high-speed messaging infrastructure, such as TIBCO Rendezvous® or TIBCO Enterprise Message Service® (EMS), will find building CEP applications easier than organizations that have not yet deployed an ESB.

When an organization has established an EDA and event-enabled its business-sensory information, it can consider deploying CEP functionality in the form of high-speed rules engines, neural networks, Bayesian networks, and other analytical models. With modern rules engines, organizations can take advantage of powerful declarative programming models to optimize business problems, detect opportunities or threats, improve operational efficiency and more. If the business solution requires statistical measures such as likelihood, confidence and probability, events are processed with mathematical models such as Bayesian networks, neural networks or Dempster-Shafer methods, to name a few.
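As a rough illustration of how declarative rules and a probabilistic model can work together, the Python sketch below pairs simple "when" conditions with a Bayesian update of the belief that a threat is under way. Everything in it is an assumption made for the example: the `Event` and `Rule` types, the rule names, the prior and the likelihood values are hypothetical and are not taken from any product. It also treats the fired rules as conditionally independent evidence and ignores rules that do not fire, which is a simplification.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Event:
    kind: str
    account: str
    amount: float = 0.0


@dataclass
class Rule:
    name: str
    condition: Callable[[Event], bool]  # declarative "when" part of the rule
    p_fire_given_threat: float          # assumed P(rule fires | threat)
    p_fire_given_normal: float          # assumed P(rule fires | no threat)


def bayes_update(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Posterior P(H | E) for a binary hypothesis H, by Bayes' rule."""
    numerator = p_e_given_h * prior
    evidence = numerator + p_e_given_not_h * (1.0 - prior)
    return numerator / evidence


def assess(events: Iterable[Event], rules: List[Rule], prior: float) -> Tuple[float, List[str]]:
    """Apply every rule to every event, updating the belief when a rule fires."""
    belief = prior
    fired: List[str] = []
    for event in events:
        for rule in rules:
            if rule.condition(event):
                fired.append(rule.name)
                belief = bayes_update(
                    belief, rule.p_fire_given_threat, rule.p_fire_given_normal
                )
    return belief, fired


# Hypothetical rules and likelihoods, chosen only for illustration.
rules = [
    Rule("password_reset", lambda e: e.kind == "password_reset", 0.5, 0.1),
    Rule(
        "large_withdrawal",
        lambda e: e.kind == "withdrawal" and e.amount > 5000,
        0.6,
        0.05,
    ),
]

events = [
    Event("password_reset", "acct-1"),
    Event("withdrawal", "acct-1", 9000.0),
]

belief, fired = assess(events, rules, prior=0.01)
print(fired, round(belief, 3))  # belief is about 0.377 (exactly 20/53)
```

With these made-up numbers, two individually weak signals raise the belief from 1% to roughly 38%, which shows why correlating events can be more informative than inspecting each one alone. A real deployment would estimate the likelihoods from historical data rather than assigning them by hand.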

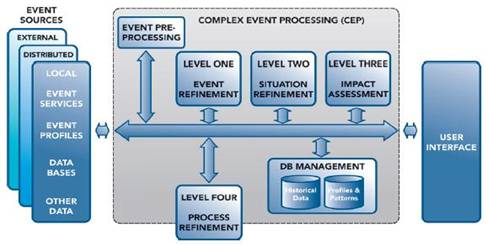

Figure 1. The JDL Model for Multisensor Data Fusion Applied to CEP

Solving complex, distributed problems requires a functional reference architecture to help organizations understand and organize the system requirements. Figure 1 represents such an architecture. The Joint Directors of Laboratories (JDL) multisensor data fusion architecture was derived from working applications of blackboard architectures in AI. This model has proven reliably applicable to detection theory, where patterns and signatures are discovered by abductive and inductive reasoning processes, and it has been the dominant functional data fusion model for decades.[i] Vivek Ranadivé, TIBCO’s founder and CEO, indirectly refers to this model when he discusses how real-time operational visibility, in the context of knowledge from historical data, is the foundation for Predictive Business®.[ii]

In part 2 of What is Complex Event Processing, I will begin to describe each block of our functional reference architecture for complex event processing.[iii] In addition, I will apply this reference architecture to a wide range of classes of CEP business problems and use cases. I will also address the confusion in terminology and the differences between CEP and ESP (event stream processing), here on the TIBCO CEP blog, in the coming weeks and months.