Data science and analytics are the new road to a successful and competitive business model. Analytics is now widely accepted as a core tenet for businesses that want to operate more efficiently. But the road is long and obscured by opaque language, overlapping capabilities and technologies, and a world awash in data. Further, technical industries like petroleum and petrochemicals require substantial domain knowledge to make effective use of their diverse data sets.

I believe the best data science in oil and gas grows from sound engineering and scientific principles and incorporates visual and interactive components that let the practitioner touch and feel the data and insights. This approach has worked well in fields where data science is well adopted, such as computational biology. Because oil and gas companies need to produce and process more hydrocarbons efficiently, data science must build on the insights already established in canonical petroleum, facilities, and drilling engineering to have the greatest impact. Otherwise, data science solutions risk “rediscovering” correlations and predictors that arise from the inherent mechanics and processes underlying the oil and gas industry. Connecting data science to core engineering principles also makes it easier for the end user to trust the advanced methodologies at work in the solution. Through rapid experimentation, users can begin to understand how the solution behaves and thinks.

At the end of the day, data science is a numbers game. Business and engineering decisions are made continuously, whether or not they are informed. For a business decision to become data driven, a relevant solution must be accessible, trusted, and easy to use. And the information that points in the right direction must be assembled quickly, efficiently, and clearly, so that it helps define the purpose and the end goal.

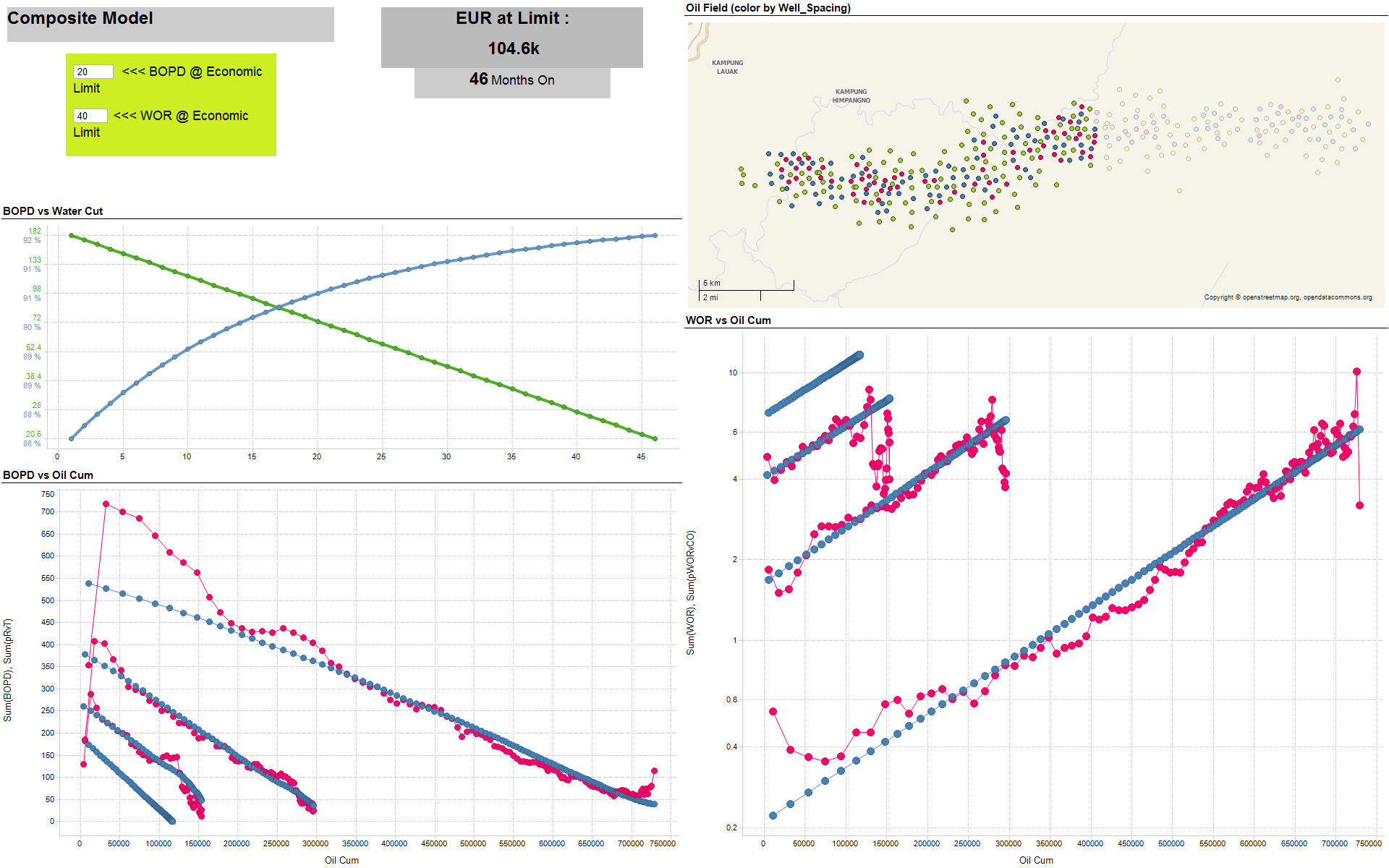

We at Ruths.ai recently completed a data science solution package for a client acting as a non-operated joint venture (NOJV) partner in a large water flood. In the end, the solution provided over $120MM in value capture over three years and greatly improved the client's influence over the operator. The solution comprised several analytic modules that targeted due-diligence, engineering, and strategic decisions. The bedrock of the solution was a reservoir monitoring and surveillance dashboard that integrated diverse data sets, including well header, production, drilling and completion, and geodynamics data. This was the first component that built trust with the user, and it allowed us to understand the key pain points of the asset. The data itself was sourced from systems of record and engineers' desk files.

During the lifetime of the asset, tactical data science analyses were added to handle high-value issues including artificial lift performance, stimulation candidate optimization, drill rig efficiency, power utilization, drilling package performance, lookback analysis, and infill drilling. These solutions leveraged common techniques like analog, offset, or type curve analysis, but also applied core data science methodologies like signal processing, clustering, linear optimization, multivariate regression, and dimensionality reduction. Because we had established a strong relationship with the data, the engineers, and the asset, we were able to deliver these tactical analytics within weeks. They became sophisticated, interactive tools that the engineers and decision makers could revisit when similar problems arose or when they wanted to better understand their historical decisions.

Implementing good data science is as much a cultural challenge as it is a technological one. Only by realigning thought and workflows to be empirically driven will companies see needle-moving results. When leveraged correctly, this new data science culture, driven by domain expertise, can give an organization the optimization and adaptability it needs to succeed against competitive and volatile market forces.

Credits: Ruths.ai is a strategic partner of TIBCO and a platinum sponsor at the Energy Forum in Houston, Sept 1-2.