At TIBCO NOW last week, I had the pleasure of announcing and demonstrating native machine learning capabilities within Flogo Flows at the edge. Models run directly on the device with no networking requirements: all of the inferencing and aggregation happens on the device itself! What does this mean? In some cases, it can lower operational costs by up to 85%, and users can begin implementing use cases that were not possible before because of the latency and network traffic involved in streaming data to, and running models in, the cloud. This is truly the next phase of IoT edge computing!

Last week, we launched with support for Google TensorFlow and TIBCO Statistica. Let’s break this down a bit. First and foremost, with TensorFlow you’ll use Python to write your training script and export the saved model. Flogo supports the higher-level TensorFlow tf.estimator API, which abstracts away the low-level coding of the graph itself. The tf.estimator package exposes a model export method in Python that writes the trained model (a SavedModel serialized as protobuf, the file format used by TensorFlow) to a directory. That directory can be zipped up and passed directly to Flogo for native inferencing. Flogo takes the zip file (or the directory path), decompresses it, and executes the model within the Golang runtime via a cgo wrapper around the dynamically linked libtensorflow.so. This means you don’t need Python on the edge device, nor anything else installed from TensorFlow, other than the OS-specific dynamic library that is linked at runtime.
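
The post doesn’t show Flogo’s loader, so the following is only a minimal sketch, under stated assumptions, of the decompress step: it unpacks a zipped export into a temporary directory and returns the folder containing saved_model.pb, which is the directory a SavedModel loader (such as the TensorFlow Go package’s `LoadSavedModel`) is pointed at. The helper names (`extractModel`, `writeEntry`, `buildArchive`) and the `export/<timestamp>/` layout are assumptions, and the file contents are placeholders rather than a real graph.

```go
package main

import (
	"archive/zip"
	"bytes"
	"fmt"
	"io"
	"os"
	"path/filepath"
	"strings"
)

// extractModel unpacks a zipped SavedModel export into a new temporary
// directory and returns the folder that holds saved_model.pb.
func extractModel(data []byte) (string, error) {
	zr, err := zip.NewReader(bytes.NewReader(data), int64(len(data)))
	if err != nil {
		return "", err
	}
	root, err := os.MkdirTemp("", "model-*")
	if err != nil {
		return "", err
	}
	modelDir := ""
	for _, f := range zr.File {
		target := filepath.Join(root, f.Name)
		// Reject entries that would land outside root ("zip slip").
		if !strings.HasPrefix(target, root+string(os.PathSeparator)) {
			os.RemoveAll(root)
			return "", fmt.Errorf("illegal path in archive: %q", f.Name)
		}
		if f.FileInfo().IsDir() {
			continue // parent directories are created by writeEntry
		}
		if err := writeEntry(f, target); err != nil {
			os.RemoveAll(root)
			return "", err
		}
		if filepath.Base(target) == "saved_model.pb" {
			modelDir = filepath.Dir(target)
		}
	}
	if modelDir == "" {
		os.RemoveAll(root)
		return "", fmt.Errorf("archive contains no saved_model.pb")
	}
	return modelDir, nil
}

// writeEntry copies one archive member to target, creating parent dirs.
func writeEntry(f *zip.File, target string) error {
	if err := os.MkdirAll(filepath.Dir(target), 0o755); err != nil {
		return err
	}
	rc, err := f.Open()
	if err != nil {
		return err
	}
	defer rc.Close()
	out, err := os.Create(target)
	if err != nil {
		return err
	}
	defer out.Close()
	_, err = io.Copy(out, rc)
	return err
}

// buildArchive zips files in memory, standing in for the zipped export dir.
func buildArchive(files map[string]string) ([]byte, error) {
	var buf bytes.Buffer
	zw := zip.NewWriter(&buf)
	for name, body := range files {
		w, err := zw.Create(name)
		if err != nil {
			return nil, err
		}
		if _, err := io.WriteString(w, body); err != nil {
			return nil, err
		}
	}
	if err := zw.Close(); err != nil {
		return nil, err
	}
	return buf.Bytes(), nil
}

func main() {
	data, err := buildArchive(map[string]string{"export/1538000000/saved_model.pb": "placeholder"})
	if err != nil {
		panic(err)
	}
	dir, err := extractModel(data)
	if err != nil {
		panic(err)
	}
	fmt.Println("load the SavedModel from:", dir)
}
```

Returning the directory rather than the .pb file matches how SavedModel loaders work: they read saved_model.pb together with the sibling variables/ folder.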

From within Flogo, you simply add the Flogo ML Inferencing activity and configure a few specifics: the model location (a path on the edge device or the location of a zip file), the input tensor name, and finally, a collection of features used to populate the graph for inferencing. You must also specify a field titled ‘framework’. Currently, ‘Tensorflow’ is the only available option; however, the activity was developed with an extensible contribution model, so additional deep learning backends can be plugged in and the activity will work just as it does today.
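
To make the configuration concrete, here is a hypothetical Go model of the activity’s four settings and of the pluggable-backend idea: each framework registers under the name used in the ‘framework’ field, and the activity dispatches to whichever one is selected. None of these names (`Settings`, `Framework`, `Register`, `Run`, `stubBackend`) come from the Flogo codebase; they are assumptions, and the stub stands in for a real TensorFlow binding so the sketch runs without libtensorflow.

```go
package main

import (
	"errors"
	"fmt"
	"sort"
)

// Settings mirrors the activity fields described above; the Go names are hypothetical.
type Settings struct {
	Model     string             // path on the device, or location of a zipped model
	InputName string             // name of the graph's input tensor
	Features  map[string]float64 // feature name -> value used to populate the graph
	Framework string             // backend to use, e.g. "Tensorflow"
}

// Framework is a hypothetical plug-in contract for an inference backend.
type Framework interface {
	Infer(s Settings) (map[string]float64, error)
}

var frameworks = map[string]Framework{}

// Register makes a backend selectable by the name used in the framework field.
func Register(name string, f Framework) { frameworks[name] = f }

// Run validates the settings, then dispatches to the selected backend.
func Run(s Settings) (map[string]float64, error) {
	if s.Model == "" || s.InputName == "" {
		return nil, errors.New("model location and input tensor name are required")
	}
	if len(s.Features) == 0 {
		return nil, errors.New("at least one feature is required")
	}
	f, ok := frameworks[s.Framework]
	if !ok {
		return nil, fmt.Errorf("unsupported framework %q (registered: %v)", s.Framework, registered())
	}
	return f.Infer(s)
}

// registered lists backend names in a stable order for error messages.
func registered() []string {
	names := make([]string, 0, len(frameworks))
	for n := range frameworks {
		names = append(names, n)
	}
	sort.Strings(names)
	return names
}

// stubBackend stands in for a real TensorFlow binding so the sketch runs
// without libtensorflow; it just reports how many features it received.
type stubBackend struct{}

func (stubBackend) Infer(s Settings) (map[string]float64, error) {
	return map[string]float64{"featureCount": float64(len(s.Features))}, nil
}

func main() {
	Register("Tensorflow", stubBackend{})
	out, err := Run(Settings{
		Model:     "/home/pi/models/activity.zip", // hypothetical path
		InputName: "inputs",                       // hypothetical tensor name
		Features:  map[string]float64{"x_mean": 0.12, "y_mean": 0.30},
		Framework: "Tensorflow",
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(out)
}
```

A registry keyed by name is one common way to get this kind of extensibility in Go: adding a backend means registering a new implementation, without changing how the activity is configured.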

The output of the activity is a complex object containing a collection of results. Let’s walk through an example. At TIBCO NOW in San Diego, I demonstrated these capabilities on a Raspberry Pi running a Flogo Flow and a DNN model built in TensorFlow to classify data from an accelerometer. The Flogo Flow aggregated the data over 50ms periods, collected 11 aggregated results, and every 500ms passed this data to the TensorFlow model for scoring. The output of the TensorFlow model is the set of classifications (walking, jogging, and standing) along with the probability of each. The results were delivered in ~60ms with >99% accuracy!
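
The aggregation and scoring loop from the demo can be sketched roughly as follows. The post doesn’t specify how each 50ms period was summarized or what the result object looks like, so this assumes a per-axis mean per window, the 11 most recent windows as the batch scored every 500ms, and a result expressed as a map from class label to probability. `Sample`, `windowMeans`, `lastN`, and `topClass` are hypothetical names, and the readings in `main` are synthetic.

```go
package main

import (
	"fmt"
	"sort"
)

// Sample is one accelerometer reading; T is milliseconds since start (T >= 0).
type Sample struct {
	T       int64
	X, Y, Z float64
}

// windowMeans buckets samples into fixed windows of windowMs and returns the
// per-axis mean of each non-empty window, oldest first.
func windowMeans(samples []Sample, windowMs int64) [][3]float64 {
	type acc struct {
		sum [3]float64
		n   int
	}
	buckets := map[int64]*acc{}
	for _, s := range samples {
		k := s.T / windowMs
		if buckets[k] == nil {
			buckets[k] = &acc{}
		}
		a := buckets[k]
		a.sum[0] += s.X
		a.sum[1] += s.Y
		a.sum[2] += s.Z
		a.n++
	}
	keys := make([]int64, 0, len(buckets))
	for k := range buckets {
		keys = append(keys, k)
	}
	sort.Slice(keys, func(i, j int) bool { return keys[i] < keys[j] })
	out := make([][3]float64, 0, len(keys))
	for _, k := range keys {
		a, n := buckets[k], float64(buckets[k].n)
		out = append(out, [3]float64{a.sum[0] / n, a.sum[1] / n, a.sum[2] / n})
	}
	return out
}

// lastN keeps the n most recent windows: the batch handed to the model.
func lastN(w [][3]float64, n int) [][3]float64 {
	if len(w) <= n {
		return w
	}
	return w[len(w)-n:]
}

// topClass returns the label with the highest probability (ties broken
// alphabetically so the result is deterministic).
func topClass(probs map[string]float64) (string, float64) {
	best, bestP := "", -1.0
	for label, p := range probs {
		if p > bestP || (p == bestP && label < best) {
			best, bestP = label, p
		}
	}
	return best, bestP
}

func main() {
	// Synthetic readings every 10 ms for 600 ms.
	var samples []Sample
	for t := int64(0); t < 600; t += 10 {
		samples = append(samples, Sample{T: t, X: float64(t % 50), Y: 0.1, Z: 9.8})
	}
	batch := lastN(windowMeans(samples, 50), 11)
	fmt.Println("windows sent to the model:", len(batch))

	// Made-up model output, used only to exercise topClass.
	label, p := topClass(map[string]float64{"walking": 0.2, "jogging": 0.7, "standing": 0.1})
	fmt.Printf("prediction: %s (p=%.2f)\n", label, p)
}
```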

The demo, along with the Python training code and sample training data sets, will be made available on the Flogo GitHub page.

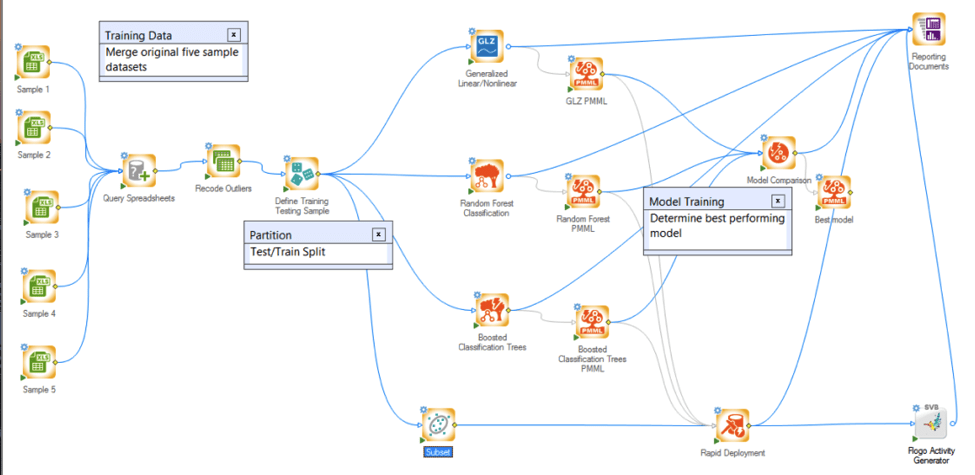

Okay, let’s take a look at the TIBCO Statistica support now, shall we? TIBCO Statistica provides a comprehensive range of statistics and machine learning capabilities in a visual workflow. In other words, much of what you would do in Python code with TensorFlow is done graphically within the Statistica UI. Statistica is a powerful, mature tool; what I want to focus on here is its new ability to export models natively as Flogo Activities.

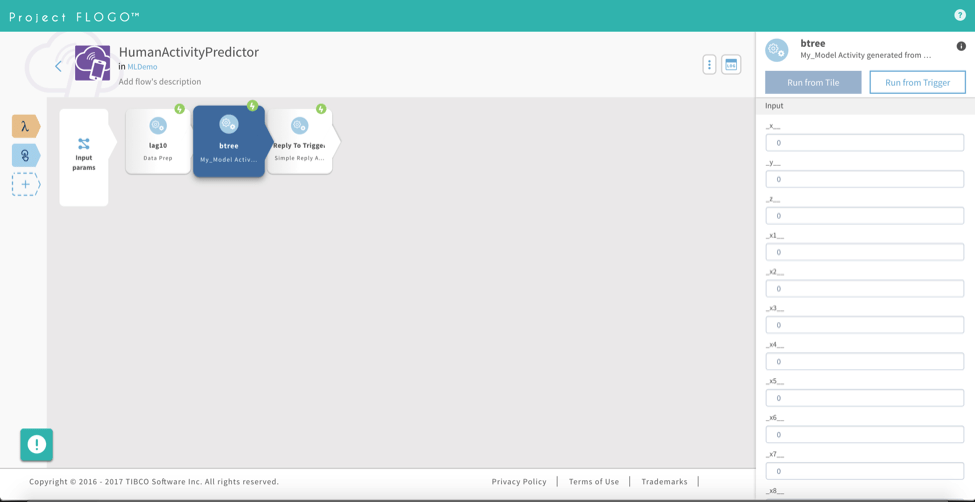

The export to Flogo produces all of the Golang code and JSON metadata that Flogo needs to execute the model directly within a Flogo Flow. Just place the exported bundle from Statistica in a git repo and install it in the Flogo WebUI (or manually add the ref to your Flogo app JSON). Then send the device data to this activity for inferencing. See the screenshot of a Statistica-produced Flogo Activity within the Flogo WebUI.
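
For a feel of what “Golang code plus JSON metadata” means, here is a hypothetical, standard-library-only sketch loosely shaped like a Flogo activity: a JSON descriptor declaring inputs and outputs, and a Go type whose `Eval` method reads inputs and sets outputs. It defines its own minimal `Context` interface instead of importing Flogo, and a toy logistic-regression scorer with made-up coefficients stands in for whatever model Statistica actually exports; it is not the code Statistica generates.

```go
package main

import (
	"encoding/json"
	"fmt"
	"math"
)

// metadataJSON is a hypothetical stand-in for the JSON descriptor that
// ships alongside the generated Go code.
const metadataJSON = `{
  "name": "statistica-score",
  "type": "flogo:activity",
  "inputs":  [{"name": "x1", "type": "number"}, {"name": "x2", "type": "number"}],
  "outputs": [{"name": "score", "type": "number"}]
}`

// Context is a minimal, self-defined version of what a flow hands an activity.
type Context interface {
	GetInput(name string) interface{}
	SetOutput(name string, value interface{})
}

type metadata struct {
	Inputs []struct {
		Name string `json:"name"`
	} `json:"inputs"`
}

// Made-up coefficients "baked in" at export time.
var coef = map[string]float64{"x1": 0.8, "x2": -1.2}

const intercept = 0.1

// ScoreActivity scores declared inputs with a toy logistic-regression model.
type ScoreActivity struct{ inputs []string }

// NewScoreActivity reads the input names from the JSON descriptor.
func NewScoreActivity() (*ScoreActivity, error) {
	var md metadata
	if err := json.Unmarshal([]byte(metadataJSON), &md); err != nil {
		return nil, err
	}
	a := &ScoreActivity{}
	for _, in := range md.Inputs {
		a.inputs = append(a.inputs, in.Name)
	}
	return a, nil
}

// Eval reads each declared input, computes the score, and sets the "score" output.
func (a *ScoreActivity) Eval(ctx Context) (done bool, err error) {
	z := intercept
	for _, name := range a.inputs {
		v, ok := ctx.GetInput(name).(float64)
		if !ok {
			return false, fmt.Errorf("input %q missing or not a number", name)
		}
		z += coef[name] * v
	}
	ctx.SetOutput("score", 1/(1+math.Exp(-z)))
	return true, nil
}

// mapContext is a trivial in-memory Context used to drive the activity.
type mapContext struct{ in, out map[string]interface{} }

func (c *mapContext) GetInput(name string) interface{}        { return c.in[name] }
func (c *mapContext) SetOutput(name string, value interface{}) { c.out[name] = value }

func main() {
	act, err := NewScoreActivity()
	if err != nil {
		panic(err)
	}
	// Simulated device data arriving at the activity.
	ctx := &mapContext{in: map[string]interface{}{"x1": 1.0, "x2": 0.5}, out: map[string]interface{}{}}
	if _, err := act.Eval(ctx); err != nil {
		panic(err)
	}
	fmt.Printf("score = %.3f\n", ctx.out["score"])
}
```

Keeping the input names in the JSON descriptor, rather than hard-coding them in `Eval`, lets a UI discover the activity’s inputs and outputs without compiling the Go code.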

More details to come, including a catalog of sample TensorFlow and Statistica models, tutorials, and documentation. Check the Flogo GitHub page regularly for updates (and of course share your feedback via GitHub issues)!