Last year, TIBCO announced the open source Project Flogo at TIBCO NOW in Las Vegas. In October 2016, the project was published as a first developer preview. You can reach the project via

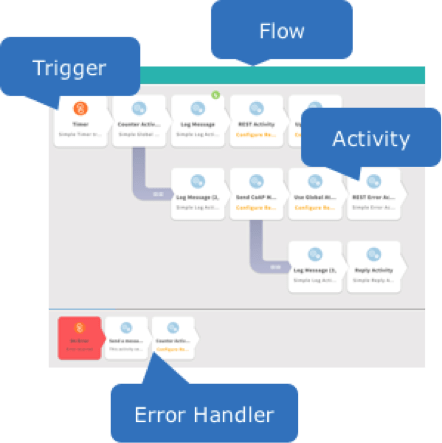

This blog post demonstrates how to build a custom Flogo adapter or connector quickly and easily for any kind of technology or interface. In Flogo terms, this is either a Trigger (to initiate and start a new Flogo flow from an interface) or an Activity (to send a message to an interface). The following picture shows the basic Flogo concepts:

Continue reading to learn how to build a Flogo Activity to send messages to Apache Kafka. Note that building a Trigger can be done with the same procedure as described here.
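To make the Trigger and Activity roles concrete, here is a minimal sketch in plain Go. The `Activity`, `Flow`, and `Trigger` types and the toy `upper` and `tag` activities are hypothetical stand-ins invented for this illustration. They are not the Flogo API. They only show the idea that a Trigger receives an event and starts a flow, and that a flow runs its Activities in order.

```go
package main

import (
	"fmt"
	"strings"
)

// Activity is a hypothetical stand-in for a Flogo Activity: one step in a
// flow that does something with the data, such as sending it to an interface.
type Activity interface {
	Eval(data string) (string, error)
}

// Flow is an ordered list of activities.
type Flow []Activity

// Run passes the data through each activity in order and stops at the first error.
func (f Flow) Run(data string) (string, error) {
	for _, a := range f {
		out, err := a.Eval(data)
		if err != nil {
			return "", err
		}
		data = out
	}
	return data, nil
}

// Trigger is a hypothetical stand-in for a Flogo Trigger: it receives an
// event from an interface and starts a new run of its flow with that event.
type Trigger struct {
	flow Flow
}

// OnEvent starts the flow with the incoming payload.
func (tr Trigger) OnEvent(payload string) (string, error) {
	return tr.flow.Run(payload)
}

// upper and tag are toy activities used only for this illustration.
type upper struct{}

func (upper) Eval(d string) (string, error) { return strings.ToUpper(d), nil }

type tag struct{ prefix string }

func (tg tag) Eval(d string) (string, error) { return tg.prefix + d, nil }

func main() {
	trigger := Trigger{flow: Flow{upper{}, tag{prefix: "sent:"}}}
	out, err := trigger.OnEvent("hello")
	fmt.Println(out, err) // prints "sent:HELLO <nil>"
}
```

In a real connector, the last activity in such a flow would hand the data to an external system such as a Kafka broker, which is what the rest of this post builds.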

Apache Kafka and other IoT technologies

Apache Kafka is an open source framework used for building real-time data pipelines and streaming apps. It is horizontally scalable, fault-tolerant, wicked fast, and runs in production in thousands of companies. You can publish and subscribe to streams of data, process streams in real time, and store streams of data in a distributed cluster.

There are many other technologies on the market for communication in Internet of Things scenarios. For example, instead of Apache Kafka you might use TIBCO FTL, a robust, high-performance messaging middleware platform for real-time, high-throughput data distribution to virtually any device. It differs from Apache Kafka through enterprise features such as security and access control, or easy setup of fault tolerance and guaranteed delivery.

From a Flogo perspective, it is easy to build your own connectors for various standards, technologies, and products once, and then reuse them in your IoT integration flows. This is very important because the IoT world already contains plenty of different technologies that have to communicate with each other.

Development of a custom Flogo adapter

Good news first: Even if you do not have much (or any) experience with the Go programming language, it is very easy to develop your own adapters. As Go is simple and intuitive (but still powerful), you can learn it by doing (as I do).

I took the following steps to build an Apache Kafka Activity for Flogo:

- Get access to the technology you want to integrate
    - For Kafka, this could be a locally installed broker or a remote connection
- Find, install, and use a corresponding Go library
    - Various Go APIs are available for Apache Kafka
    - I chose the Kafka API from optiopay because it is very simple and easy to use. Depending on your requirements regarding functionality, performance, or maturity, other options might be better
    - Install the library into your Go workspace (command "go install")
    - Run a "hello world" example (optiopay delivers a nice, simple demo where you send and consume messages from the command line)
- Duplicate an existing Flogo Activity
    - Choose a similar Flogo Activity (e.g. Kafka is similar to MQTT: you connect to a broker and send messages)
    - The folder includes implementation, test, runtime config, and UI config
    - Use the existing folder as a template and rewrite, update, replace, or enhance the code, config, and test
- Run "go test" to validate that the new connector works as expected
    - In my Kafka test, I see that the test sends a new message to Kafka (verified in a Kafka consumer running on the command line)
- Deploy and test the new connector in the Flogo Web UI
    - The Flogo Web UI lets you install a new activity (or trigger) via a URL link (you need to publish your connector on GitHub)
- Create a GitHub pull request to contribute your code back to the Flogo community
    - (optional step, but highly recommended :-))
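The core of such an activity can be sketched in a few lines of Go. This is an illustration under stated assumptions, not the code from the repository. The `Producer` interface, the `KafkaActivity` type, the `Eval` signature, and the input names `topic` and `message` are all hypothetical. The sketch also uses an in-memory `fakeProducer` instead of a real broker so that it runs without any external dependency. The test described above talks to a running Kafka instead.

```go
package main

import (
	"errors"
	"fmt"
)

// Producer is a hypothetical abstraction over a Kafka client library.
// A real connector would implement it on top of the chosen Go library.
type Producer interface {
	Produce(topic string, partition int32, value []byte) (offset int64, err error)
}

// KafkaActivity is a hypothetical activity that sends one message per call.
type KafkaActivity struct {
	producer Producer
}

// Eval validates the (assumed) "topic" and "message" inputs and sends the
// message to partition 0 of the topic. It returns the offset reported by the producer.
func (a *KafkaActivity) Eval(inputs map[string]interface{}) (int64, error) {
	topic, ok := inputs["topic"].(string)
	if !ok || topic == "" {
		return 0, errors.New("input 'topic' is missing or not a string")
	}
	message, ok := inputs["message"].(string)
	if !ok {
		return 0, errors.New("input 'message' is missing or not a string")
	}
	return a.producer.Produce(topic, 0, []byte(message))
}

// fakeProducer records messages in memory so the activity logic can be
// exercised without a broker. It stands in for a real Kafka producer.
type fakeProducer struct {
	sent map[string][]string
}

// Produce appends the message to the topic and returns its index as the offset.
func (f *fakeProducer) Produce(topic string, partition int32, value []byte) (int64, error) {
	f.sent[topic] = append(f.sent[topic], string(value))
	return int64(len(f.sent[topic]) - 1), nil
}

func main() {
	fp := &fakeProducer{sent: map[string][]string{}}
	act := &KafkaActivity{producer: fp}

	offset, err := act.Eval(map[string]interface{}{
		"topic":   "flogo",
		"message": "hello from Flogo",
	})
	fmt.Println(offset, err, fp.sent["flogo"]) // prints "0 <nil> [hello from Flogo]"
}
```

Hiding the client library behind a small interface keeps the input handling testable on its own. The end-to-end check against a running broker, with a command-line consumer as described in the steps, remains the way to confirm that messages really arrive in Kafka.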

Source code and video recording of building the Apache Kafka Activity for Flogo

Here is the source code on GitHub: Apache Kafka Activity for Flogo. The following video shows how to develop, test, and deploy the Apache Kafka adapter:

Any feedback or questions are highly appreciated. Please use the Flogo Community Q&A to ask questions or discuss concepts or use cases for Project Flogo.